Artificial Intelligence (AI) is a rapidly growing field that is transforming the way we live and work. One of the key components of AI is the feedback loop, which allows machines to learn and improve over time. In this blog post, we'll explore what the AI feedback loop is, how it works, and its importance in the development of AI.

What is an AI Feedback Loop?

An AI feedback loop is a process in which an AI system receives feedback on its performance, uses that feedback to improve, and then receives feedback on the improved behavior. Repeating this cycle lets the system keep learning over time.

The feedback can come from a variety of sources, such as human input, data analysis, or other AI systems. The AI system then uses this feedback to adjust its algorithms (in practice, usually the parameters of its model) and improve its performance.

How does the AI Feedback Loop work?

The AI feedback loop works in several stages. First, the AI system receives input from its environment, such as data from sensors or human input. The system then processes this input using its algorithms to produce an output.

Next, the system receives feedback on its output, which can come from a variety of sources. For example, if the AI system is a chatbot, it might receive feedback from users on how well it is able to understand and respond to their queries.

The AI system then uses this feedback to adjust its algorithms and improve its performance. For example, if the chatbot is having trouble understanding certain types of queries, it might adjust its algorithms to better recognize those types of queries in the future.

The cycle then repeats, with the AI system continuously receiving feedback and adjusting its algorithms to improve its performance.
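The stages described above can be sketched as a small loop. This is only a minimal illustration under stated assumptions: the one-weight model, the function name `feedback_loop`, the learning rate, and the sample data are all hypothetical and chosen to keep the example short.

```python
def feedback_loop(inputs, targets, learning_rate=0.1, rounds=50):
    """Repeatedly predict, receive feedback, and adjust a single weight.

    The "system" is a toy model: output = weight * input.
    The feedback is the error between the output and the desired target.
    """
    weight = 0.0
    for _ in range(rounds):
        for x, target in zip(inputs, targets):
            output = weight * x                  # 1. turn the input into an output
            error = target - output              # 2. receive feedback on the output
            weight += learning_rate * error * x  # 3. adjust using the feedback
    return weight


# Hypothetical data in which the desired output is always 2 * input.
learned = feedback_loop(inputs=[1.0, 2.0, 3.0], targets=[2.0, 4.0, 6.0])
print(round(learned, 3))
```

With this data the weight settles very close to 2, because every pass through the loop shrinks the remaining error.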

Why is the AI Feedback Loop important?

The AI feedback loop is important because it allows AI systems to continuously learn and improve over time. Without the feedback loop, AI systems would not be able to adapt to changing environments or improve their performance.

For example, imagine a self-driving car that does not have a feedback loop. The car might be able to drive on a clear, sunny day, but it would not be able to adapt to changing weather conditions or road conditions. With the feedback loop, the car can continuously learn and improve its performance, allowing it to safely navigate a variety of environments.

In addition to improving performance, the feedback loop can also make AI systems more efficient. As a system learns which inputs and computations matter most for a task, it can sometimes reach the same result with less input or processing power.

Types of feedback in the AI Feedback Loop

Feedback is an essential component of the AI feedback loop, and there are several types of feedback that an AI system can receive.

1. Supervised feedback: This type of feedback involves human input, where a person provides labeled data to the AI system. The AI system then uses this labeled data to learn and improve its performance.

For example, in image recognition tasks, a human might provide labels for different images (e.g., "cat", "dog", "bird"). The AI system can then use this labeled data to learn how to correctly identify different objects in images.
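As a rough sketch of learning from labeled data, the example below trains a nearest-centroid classifier, one of the simplest supervised methods. It is not how production image recognition works; the two-number feature vectors and the function names `train_centroids` and `predict` are assumptions made for illustration.

```python
from collections import defaultdict


def train_centroids(examples):
    """Average the feature vectors of each label to get one centroid per label."""
    sums = {}
    counts = defaultdict(int)
    for features, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}


def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    def squared_distance(centroid):
        return sum((c - f) ** 2 for c, f in zip(centroid, features))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Hypothetical features extracted from images, each paired with a human label.
labeled = [
    ([0.9, 0.2], "cat"), ([0.8, 0.3], "cat"),
    ([0.3, 0.8], "dog"), ([0.2, 0.9], "dog"),
]
centroids = train_centroids(labeled)
print(predict(centroids, [0.85, 0.25]))  # prints "cat"
```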

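2. Unsupervised feedback: This type of feedback does not involve any human input or labeling. Instead, the AI system analyzes data on its own and identifies patterns or similarities between different pieces of data. The signal it learns from is the structure of the data itself rather than an external judgment of right or wrong.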

For example, an unsupervised learning algorithm might analyze a large dataset of customer purchasing habits and identify groups or clusters of customers who have similar buying patterns.
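The customer example can be sketched with k-means, one common clustering algorithm (other algorithms would work as well). The customer data, the choice of two features, and the naive initialization from the first `k` points are assumptions for this illustration.

```python
def k_means(points, k, iterations=10):
    """Group 2-D points into k clusters using plain k-means."""
    centroids = [points[i] for i in range(k)]  # naive start: the first k points
    assignments = [0] * len(points)
    for _ in range(iterations):
        # Assign every point to its nearest centroid.
        for i, (x, y) in enumerate(points):
            distances = [(x - cx) ** 2 + (y - cy) ** 2 for cx, cy in centroids]
            assignments[i] = distances.index(min(distances))
        # Move each centroid to the mean of the points assigned to it.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:
                centroids[c] = (
                    sum(p[0] for p in members) / len(members),
                    sum(p[1] for p in members) / len(members),
                )
    return assignments


# Hypothetical customers: (orders per month, average basket value).
customers = [(1, 20), (12, 150), (2, 25), (11, 140), (1, 30), (13, 160)]
print(k_means(customers, k=2))  # prints [0, 1, 0, 1, 0, 1]
```

No labels are supplied anywhere; the two groups emerge purely from how close the points are to one another.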

3. Reinforcement feedback: This type of feedback involves rewarding an AI system when it performs well and penalizing it when it performs poorly.

For example, in a game-playing scenario, an AI agent might receive a reward when it successfully completes a level or defeats an opponent. Conversely, it might receive a penalty if it fails to complete a level or loses to an opponent.
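A minimal sketch of reward-and-penalty learning is the epsilon-greedy bandit below. It is much simpler than a full game-playing agent: the agent repeatedly picks one of several actions and keeps a running average of the rewards each one produced. The win probabilities, the function name `run_bandit`, and the parameter values are hypothetical.

```python
import random


def run_bandit(reward_probabilities, steps=2000, epsilon=0.1, seed=0):
    """Estimate the value of each action from rewards (+1) and penalties (-1)."""
    rng = random.Random(seed)
    values = [0.0] * len(reward_probabilities)  # estimated value per action
    counts = [0] * len(reward_probabilities)
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(len(values))  # explore a random action
        else:
            action = values.index(max(values))   # exploit the best estimate so far
        # Environment: reward with the action's probability, otherwise a penalty.
        reward = 1.0 if rng.random() < reward_probabilities[action] else -1.0
        counts[action] += 1
        # Incremental average of the rewards seen for this action.
        values[action] += (reward - values[action]) / counts[action]
    return values


# Hypothetical game with two strategies that win 20% and 80% of the time.
estimates = run_bandit([0.2, 0.8])
print(estimates.index(max(estimates)))
```

After enough steps the second strategy should end up with the higher estimate, so the agent favors it.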

Each type of feedback has its strengths and weaknesses, and the choice of which type to use depends on the specific task at hand. Regardless of the type used, feedback is what lets AI systems learn and improve over time.

Challenges in Implementing the AI Feedback Loop

While the AI feedback loop is an essential component of AI development, it is not without its challenges.

Quality and Quantity of Data

One of the primary challenges in implementing the feedback loop is the quality and quantity of data.

For an AI system to learn and improve, it needs a large amount of high-quality data. However, obtaining this data can be difficult, particularly for industries with limited access to data or where privacy concerns restrict the use of personal information.

Accurate and Unbiased Feedback

Another challenge is ensuring that the feedback received by the AI system is accurate and unbiased. If the feedback is inaccurate or biased, it could lead to incorrect adjustments to algorithms, which would result in poor performance.

Technical Challenges

There are also technical challenges involved in implementing the feedback loop. For example, some AI systems may require significant computational resources to process large amounts of data quickly enough to provide timely feedback.

Ethical Considerations

Finally, there are ethical considerations involved in implementing the feedback loop. As AI systems become more sophisticated and powerful, there is a risk that they could be used to make decisions that significantly impact people's lives. It is important to ensure that these systems are developed ethically and with appropriate oversight to prevent unintended consequences.

Despite these challenges, however, the importance of the AI feedback loop cannot be overstated. By continuously learning and improving over time, AI systems have tremendous potential to transform our world for the better.

How can the AI Feedback Loop be optimized for better performance?

Optimizing the AI feedback loop is crucial for achieving better performance from AI systems. Here are some strategies that can be used to optimize the feedback loop:

1. Increase the Quantity and Quality of Data

As mentioned earlier, having a large amount of high-quality data is essential for an AI system to learn and improve over time. By increasing the quantity and quality of data, AI systems can gain a deeper understanding of their environment and make more accurate predictions.

One way to increase the quantity of data is by using data augmentation techniques, such as rotating or flipping images in computer vision tasks. When more data cannot be collected, transfer learning offers a complementary approach: a pre-trained model is used as the starting point for training a new one, which typically reduces the amount of task-specific data required.
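To make the augmentation idea concrete, here is a small sketch that flips and rotates an image stored as a list of pixel rows. Real pipelines usually rely on an image library; the plain-list representation and the helper names are assumptions chosen so the example has no dependencies.

```python
def flip_horizontal(image):
    """Mirror each row left to right."""
    return [row[::-1] for row in image]


def rotate_90_clockwise(image):
    """Rotate the grid a quarter turn clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def augment(image):
    """Return the original image plus two transformed variants."""
    return [image, flip_horizontal(image), rotate_90_clockwise(image)]


# A hypothetical 2x3 grayscale image stored as rows of pixel values.
image = [[1, 2, 3],
         [4, 5, 6]]
for variant in augment(image):
    print(variant)
```

Each variant keeps the original label, so one labeled image becomes three training examples.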

To improve the quality of data, it's important to ensure that the data is representative of the real-world scenarios that the AI system will encounter. This can be achieved by collecting data from diverse sources and ensuring that it covers a wide range of possible scenarios.

2. Use Reinforcement Learning Techniques

Reinforcement learning techniques can be used to optimize the feedback loop by providing more targeted feedback to an AI system. In reinforcement learning, an agent receives rewards or penalties based on its actions in an environment.

By using reinforcement learning techniques, an AI system can learn which actions lead to positive outcomes and which lead to adverse ones. For tasks that involve sequences of decisions, this can let it adjust its behavior more effectively than supervised or unsupervised learning alone.
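One widely used reinforcement learning technique is tabular Q-learning. The sketch below applies it to a made-up environment, a short corridor in which only reaching the last cell is rewarded; the environment, the function name `train_q_table`, and all parameter values are assumptions for illustration.

```python
import random


def train_q_table(n_states=5, episodes=200, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning on a corridor; reaching the last state gives reward +1."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]  # q[state][action]; 0 = left, 1 = right
    for _ in range(episodes):
        state = 0
        while state != n_states - 1:
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)  # explore, or break a tie at random
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state = max(0, state - 1) if action == 0 else state + 1
            reward = 1.0 if next_state == n_states - 1 else 0.0
            # Move the estimate toward reward plus discounted best future value.
            target = reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


q_table = train_q_table()
policy = ["right" if right > left else "left" for left, right in q_table[:-1]]
print(policy)
```

The agent is never told that moving right is correct. It works this out because the reward at the end of the corridor propagates backward through the table.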

3. Continuously Monitor and Evaluate Performance

To optimize the feedback loop, it's important to continuously monitor and evaluate an AI system's performance. This involves setting up metrics to measure performance, such as accuracy or precision, and regularly reviewing these metrics to identify areas where improvement is needed.

By monitoring performance closely, it's possible to detect issues early on and make adjustments before they become major problems. It also allows for continuous improvement as new data becomes available or as environmental conditions change.
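As a sketch of what such monitoring might look like, the code below computes accuracy and precision and tracks accuracy over a sliding window of recent predictions. The class name `RollingAccuracyMonitor`, the window size, and the alert threshold are hypothetical choices, not recommended values.

```python
from collections import deque


def accuracy(predictions, labels):
    """Fraction of predictions that match the true labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def precision(predictions, labels, positive=1):
    """Of the items predicted positive, the fraction that really are positive."""
    predicted_positive = [y for p, y in zip(predictions, labels) if p == positive]
    if not predicted_positive:
        return 0.0
    return sum(y == positive for y in predicted_positive) / len(predicted_positive)


class RollingAccuracyMonitor:
    """Track accuracy over the most recent `window` outcomes."""

    def __init__(self, window=100, threshold=0.9):
        self.outcomes = deque(maxlen=window)  # old outcomes drop off automatically
        self.threshold = threshold

    def record(self, prediction, label):
        self.outcomes.append(prediction == label)

    def needs_attention(self):
        """True when recent accuracy has dropped below the threshold."""
        if not self.outcomes:
            return False
        return sum(self.outcomes) / len(self.outcomes) < self.threshold


print(accuracy([1, 0, 1, 1], [1, 0, 0, 1]))  # prints 0.75
```

A sliding window is used so that a recent drop in quality is not hidden by a long history of good results.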

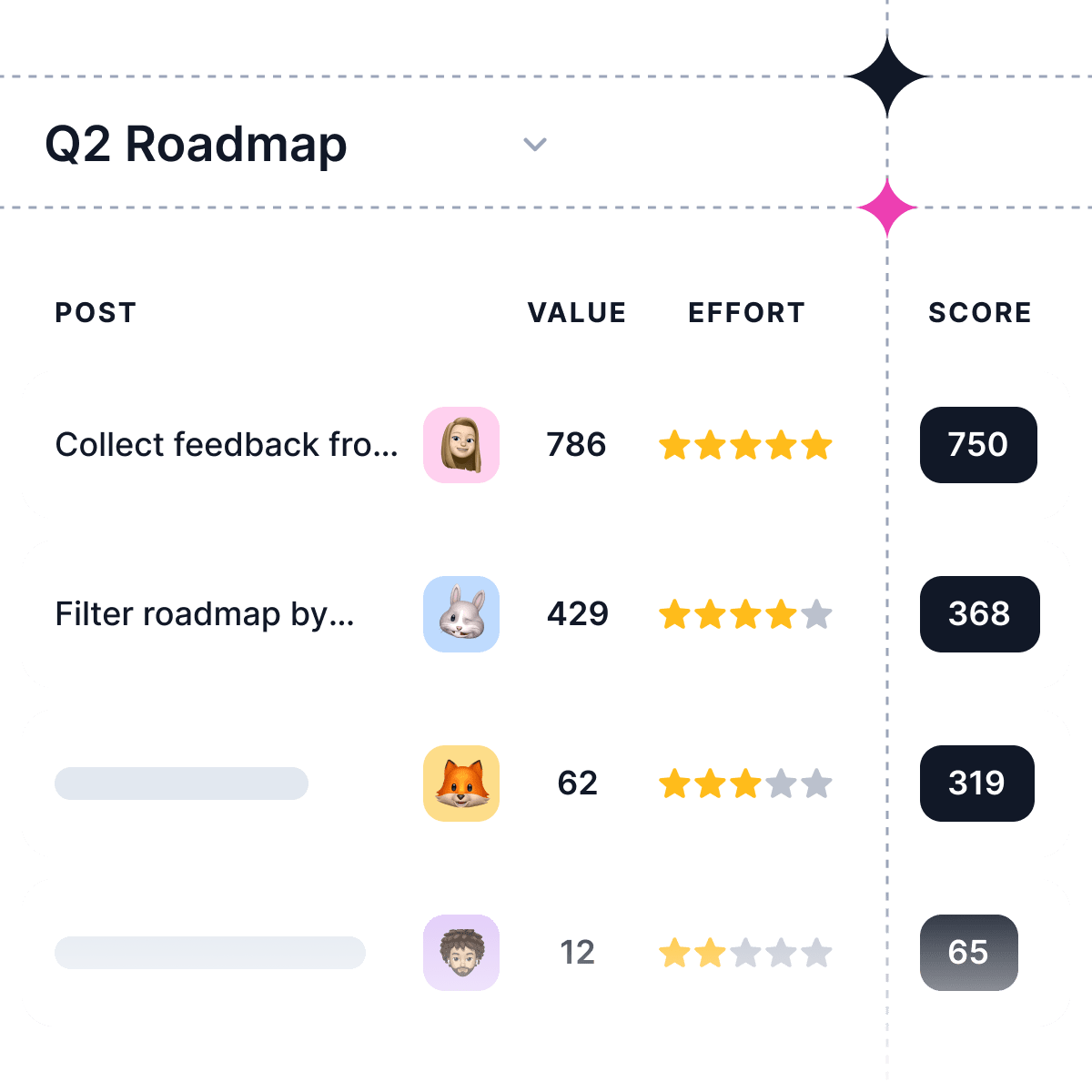

4. Incorporate Human Feedback

Finally, incorporating human feedback into the feedback loop can help optimize an AI system's performance further. Human input can provide valuable insights into how well an AI system is performing in real-world scenarios and identify areas where improvement is needed.

For example, if an AI chatbot is not responding appropriately to certain types of queries from users, human feedback can help identify these issues so that they can be addressed more effectively.
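One simple way to act on this kind of feedback is to group user ratings by query category and flag the categories that score poorly. The sketch below assumes ratings arrive as (category, was_helpful) pairs; the function name, the category names, and both thresholds are hypothetical.

```python
from collections import defaultdict


def flag_weak_categories(ratings, min_ratings=3, threshold=0.6):
    """Return query categories whose share of helpful ratings is below threshold.

    `ratings` is an iterable of (category, was_helpful) pairs from users.
    Categories with fewer than `min_ratings` ratings are skipped as too noisy.
    """
    helpful = defaultdict(int)
    total = defaultdict(int)
    for category, was_helpful in ratings:
        total[category] += 1
        helpful[category] += int(was_helpful)
    return sorted(
        category
        for category in total
        if total[category] >= min_ratings
        and helpful[category] / total[category] < threshold
    )


# Hypothetical thumbs-up / thumbs-down ratings collected from chatbot users.
ratings = [
    ("billing", True), ("billing", True), ("billing", False),
    ("refunds", False), ("refunds", False), ("refunds", True),
    ("shipping", False),
]
print(flag_weak_categories(ratings))  # prints ['refunds']
```

The flagged categories point to where additional training data or manual review is likely to help most.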

Incorporating human feedback also helps ensure that ethical considerations are taken into account when developing AI systems. By involving people in the development process, it's possible to create systems that are fairer, more transparent, and less likely to have unintended consequences.

Overall, optimizing the feedback loop is essential for achieving better performance from AI systems. By increasing the quantity and quality of data, using reinforcement learning techniques, continuously monitoring performance, and incorporating human feedback, we can create smarter machines with greater potential to transform our world for the better.

What are the limitations and potential risks of the AI Feedback Loop?

While the AI feedback loop is a powerful tool for improving the performance of AI systems, it also has limitations and can pose potential risks.

Limitations

One limitation of the AI feedback loop is that it relies on the quality of data available. If the data used to train an AI system is biased or incomplete, then the resulting algorithms will be similarly flawed. This can lead to biased decision-making and poor performance in real-world scenarios.

Another limitation is that certain tasks may not lend themselves well to feedback-based learning. For example, some complex tasks require a high degree of creativity or intuition, which are difficult to quantify and provide feedback on.

Finally, there may be situations where obtaining feedback is difficult or impossible. For example, in cases where an AI system operates in an environment where there are no clear metrics for success or failure (such as with creative tasks), it may be challenging to provide meaningful feedback.

Potential Risks

In addition to these limitations, there are also potential risks associated with the use of the AI feedback loop. One risk is that an AI system may become over-reliant on its training data, a problem known as overfitting, and fail to adapt when presented with novel scenarios. This could result in poor decision-making or even dangerous outcomes if the system fails to recognize unexpected inputs.

Another risk is that an AI system may become too good at performing specific tasks but lack general intelligence. In such cases, the system may be unable to apply its knowledge in new contexts or make decisions outside of its narrow area of expertise.

Finally, there are ethical risks associated with using feedback-based learning approaches in AI systems. For example, if a chatbot receives biased input from users (such as discriminatory language), it could learn these biases and perpetuate them in future interactions. Similarly, if an autonomous vehicle receives reinforcement signals based on aggressive driving behavior (such as speeding), it could learn these behaviors and put passengers and other drivers at risk.

It's important for developers and users of AI systems to be aware of these limitations and potential risks when designing and implementing feedback loops. By mitigating these risks, for example by ensuring diverse training data sources or incorporating human oversight into decision-making processes, we can help ensure that AI systems are developed ethically and responsibly for everyone's benefit.